You Can't Secure What You Can't See: Why Shadow AI Is Your Biggest Blind Spot

Every enterprise CISO has lived through the shadow IT era. Unsanctioned SaaS apps proliferating across departments, sensitive data flowing into tools nobody vetted, and security teams playing an endless game of catch-up. That fight took a decade to bring under control.

Now it's happening again — faster, with higher stakes, and with tools that are far harder to detect.

Shadow AI is the unsanctioned use of AI services across your organization: developers piping proprietary code into ChatGPT, marketing teams drafting strategy docs in Claude, analysts uploading financial models to Perplexity. None of these tools went through procurement. None were risk-assessed. And most security stacks have no idea they exist on the network.

The numbers are staggering. According to Microsoft's 2024 Work Trend Index, 78% of AI users are bringing their own AI tools to work — without company provision or approval. Gartner's 2025 research puts it more bluntly: 69% of organizations know or suspect their employees are using prohibited generative AI tools. The question isn't whether shadow AI is happening — it's whether you have any visibility into it at all.

The Cost of Flying Blind

This isn't a theoretical risk. Cisco's 2025 Cybersecurity Readiness Index found that 46% of organizations have already experienced internal data leaks through generative AI. Cyberhaven's April 2025 research reveals that 34.8% of data employees put into AI tools is sensitive — up from just 10.7% two years ago. Source code. R&D materials. Customer data. Financial models. All flowing into tools your security team can't see.

The financial impact is just as concrete. IBM's 2025 Cost of a Data Breach Report found that breaches involving high levels of shadow AI cost roughly $670,000 more on average, and that 63% of breached organizations either had no AI governance policy or were still developing one.

Samsung learned this the hard way. In spring 2023, engineers pasted proprietary source code, meeting transcripts, and chip test sequences into ChatGPT: three separate data leaks in twenty days. Samsung responded by banning generative AI tools on company devices and networks. Apple, JPMorgan Chase, Goldman Sachs, and Deutsche Bank imposed restrictions of their own. Blanket bans were the only tool they had, because they lacked the visibility to do anything more surgical.

Why Traditional Security Tools Miss Shadow AI

Most enterprise security architectures weren't built for this threat model.

CASB and SWG solutions rely on known application signatures. They maintain catalogs of sanctioned and unsanctioned SaaS apps, but the AI landscape is exploding — new providers launch weekly, existing ones spin up new API endpoints, and open-source models get hosted on ephemeral infrastructure. No static catalog keeps pace.

DLP tools inspect content but can't identify AI-specific risk. They'll catch a Social Security number in an email attachment, but they won't flag a developer sending your proprietary algorithm to an AI coding assistant for optimization. LayerX's 2025 research found that 77% of AI-using employees copy and paste data directly into chatbot queries — the kind of unstructured, context-dependent data that traditional DLP patterns miss entirely.
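To make the gap concrete, here is a minimal sketch of pattern-based DLP, assuming a single hypothetical rule for US Social Security numbers. The rule, the function name `dlp_flags`, and both sample strings are illustrative and not taken from any product.

```python
import re

# Hypothetical DLP rule: US Social Security numbers in NNN-NN-NNNN form.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def dlp_flags(text: str) -> bool:
    """Return True if the text matches the structured-data rule."""
    return SSN_PATTERN.search(text) is not None


email_body = "Employee SSN: 123-45-6789"
prompt = "Optimize this: def price(order): return order.qty * SECRET_MARGIN"

print(dlp_flags(email_body))  # structured identifier, so the rule fires
print(dlp_flags(prompt))      # proprietary logic with no pattern to match
```

The second string is the sensitive one from a business standpoint, yet nothing in it looks like a regulated identifier, so a pattern rule has nothing to match.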

Network monitoring tools see traffic volumes, not intent. A spike in HTTPS traffic to api.openai.com looks the same as any other API call. And Cyberhaven found that 32.3% of ChatGPT usage happens through personal accounts, completely bypassing SSO, enterprise logging, and any controls you've put in place.

The result: most CISOs are operating with a massive blind spot. Cisco's 2025 report found that only 7% of organizations have reached maturity in AI security readiness. They've secured the front door while AI traffic flows freely through thousands of windows they don't know exist.

The Discovery Dilemma: Visibility vs. Disruption

Here's where it gets interesting — and where most vendors get it wrong.

The instinct is to intercept everything. Run all traffic through a TLS-terminating proxy, crack open every connection, inspect every payload. It's what we do for web security, so why not for AI?

Because it breaks things. Badly.

Modern AI providers sit behind aggressive bot detection such as Cloudflare challenges and TLS fingerprinting, and some native clients pin certificates. When you MITM a connection to ChatGPT's web interface, you often don't get inspection. You get a Cloudflare error page and a frustrated employee who starts tethering to their phone to bypass your security stack entirely. You've traded a visibility problem for a visibility problem plus a trust problem.

This is the fundamental dilemma: you need to see shadow AI traffic to secure it, but the act of inspecting it can push usage underground.

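The right approach separates discovery from enforcement. You don't need to decrypt a connection to know it's happening. The HTTP CONNECT request a client sends to a forward proxy names the destination host in plaintext before the TLS handshake begins, and conventional DNS queries do the same. (Encrypted DNS such as DNS-over-HTTPS hides the lookup from the network, which is one reason endpoint visibility matters too.) That's enough to answer the critical first question: what AI services are my people using?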
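As a rough illustration of how little is needed, the sketch below parses a CONNECT request line the way a forward proxy sees it, before any TLS bytes are exchanged. The function name `connect_target` is hypothetical, and a production proxy would also handle headers, authentication, and malformed input more carefully.

```python
from typing import Optional, Tuple


def connect_target(request_line: str) -> Optional[Tuple[str, int]]:
    """Parse 'CONNECT host:port HTTP/1.1' and return (host, port), else None."""
    parts = request_line.strip().split()
    if len(parts) != 3 or parts[0].upper() != "CONNECT":
        return None
    # rpartition keeps bracketed IPv6 literals such as [::1]:443 intact.
    host, sep, port = parts[1].rpartition(":")
    if not sep or not host or not port.isdigit():
        return None
    return host.strip("[]").lower(), int(port)


print(connect_target("CONNECT api.openai.com:443 HTTP/1.1"))
# → ('api.openai.com', 443)
```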

A Two-Layer Architecture for Shadow AI Discovery

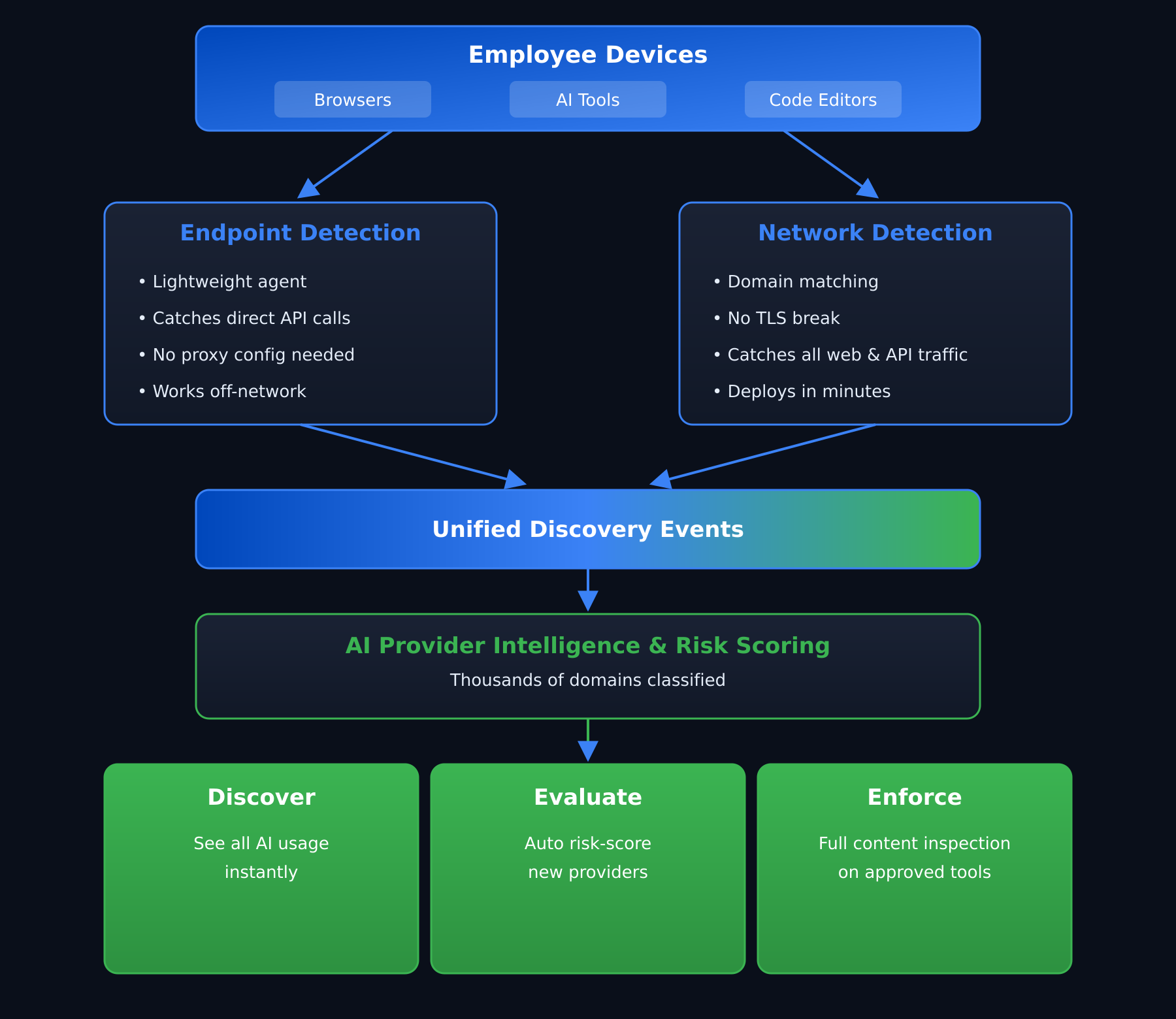

Effective shadow AI discovery requires detection at two network layers, each with different strengths:

Layer 1: Network-level domain detection. When traffic flows through your forward proxy, the CONNECT request reveals the target hostname. Match that against a comprehensive AI provider database — thousands of domains, not dozens — and you have real-time visibility into every AI service your organization touches. Crucially, for unknown or unvetted providers, you pass the traffic through without breaking TLS. No decryption, no certificate errors, no user disruption. Just a quiet discovery event logged with the domain, timestamp, and source.
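A minimal sketch of the Layer 1 matching step might look like the following. It assumes a tiny in-memory set standing in for a provider database with thousands of entries. The names `AI_PROVIDER_DOMAINS`, `match_ai_provider`, and `discovery_event`, and the shape of the event record, are all hypothetical.

```python
from datetime import datetime, timezone
from typing import Optional

# Hypothetical stand-in for a provider database with thousands of entries.
AI_PROVIDER_DOMAINS = frozenset({"openai.com", "anthropic.com", "perplexity.ai"})


def match_ai_provider(hostname: str) -> Optional[str]:
    """Return the listed domain that hostname equals or is a subdomain of."""
    labels = hostname.lower().rstrip(".").split(".")
    # Try the full name, then each parent: api.openai.com -> openai.com -> com
    for i in range(len(labels)):
        candidate = ".".join(labels[i:])
        if candidate in AI_PROVIDER_DOMAINS:
            return candidate
    return None


def discovery_event(hostname: str, source_ip: str) -> Optional[dict]:
    """Build a log record from the CONNECT hostname; the TLS payload is never read."""
    provider = match_ai_provider(hostname)
    if provider is None:
        return None
    return {
        "domain": hostname,
        "provider": provider,
        "source": source_ip,
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "action": "pass_through",  # tunnel is relayed without decryption
    }


print(discovery_event("api.openai.com", "10.0.0.5"))
```

Matching on whole labels rather than raw string suffixes avoids false positives such as `notopenai.com` matching `openai.com`.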

Layer 2: Endpoint detection. Not all AI traffic routes through the proxy. Developers configure direct API calls. Browser extensions bypass proxy settings. Lightweight agents running on endpoints can monitor DNS resolutions — the moment a machine resolves an AI provider domain, you know. Privacy-preserving matching ensures the agent never holds a plaintext domain list while still enabling instant lookups against thousands of known AI domains.
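The text does not specify how the privacy-preserving matching works, so the sketch below is one possible approach and an assumption on our part: the agent receives only keyed SHA-256 digests of the domain list and hashes each resolved name before lookup. The key, the function names, and the sample domains are hypothetical. Because the agent must hold the key, this keeps the list out of plain sight rather than making it cryptographically secret.

```python
import hashlib
import hmac
from typing import FrozenSet


def domain_digest(domain: str, key: bytes) -> str:
    """Keyed SHA-256 digest of a normalized domain name."""
    return hmac.new(key, domain.lower().encode("utf-8"), hashlib.sha256).hexdigest()


# Server side (assumed): digests are computed centrally and shipped to agents.
KEY = b"per-deployment-key"  # hypothetical; would be provisioned per tenant
SHIPPED_DIGESTS = frozenset(
    domain_digest(d, KEY) for d in ("openai.com", "anthropic.com")
)


def is_ai_domain(resolved_name: str, digests: FrozenSet[str], key: bytes) -> bool:
    """Agent side: hash the resolved name and each parent domain, then look up."""
    labels = resolved_name.lower().rstrip(".").split(".")
    return any(
        domain_digest(".".join(labels[i:]), key) in digests
        for i in range(len(labels))
    )


print(is_ai_domain("api.anthropic.com", SHIPPED_DIGESTS, KEY))
print(is_ai_domain("intranet.example.com", SHIPPED_DIGESTS, KEY))
```

Set membership on fixed-length digests is a constant-time lookup on average, so the check stays fast even with thousands of entries.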

Together, these layers provide comprehensive visibility without a single broken connection or user complaint.

From Discovery to Decision: What Comes After Visibility

Discovery is the foundation, not the destination. Once you have visibility into shadow AI usage, the real security work begins:

Risk-tier your discoveries automatically. Not all shadow AI is equal. An engineer using GitHub Copilot through their personal account is a different risk profile than someone uploading patient records to an unvetted AI startup. Automated risk scoring — based on the provider's security posture, compliance certifications, data handling policies, and jurisdiction — lets your team focus on what matters.
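One way to picture automated risk scoring is a weighted checklist over the four factors named above. The sketch below is illustrative only: the field names, weights, and tier thresholds are assumptions chosen for the example, not a recommended scoring model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProviderProfile:
    name: str
    has_security_attestation: bool  # security posture / compliance certifications
    trains_on_customer_data: bool   # data handling policy
    supports_enterprise_sso: bool   # security posture
    jurisdiction_approved: bool     # hosting and legal jurisdiction


def risk_score(p: ProviderProfile) -> int:
    """Sum hypothetical penalty weights; 0 is lowest risk, 100 is highest."""
    score = 0
    if not p.has_security_attestation:
        score += 30
    if p.trains_on_customer_data:
        score += 35
    if not p.supports_enterprise_sso:
        score += 15
    if not p.jurisdiction_approved:
        score += 20
    return score


def risk_tier(score: int) -> str:
    """Map a score to a tier using assumed thresholds."""
    if score >= 60:
        return "high"
    if score >= 30:
        return "medium"
    return "low"


vetted = ProviderProfile("vetted-assistant", True, False, True, True)
unvetted = ProviderProfile("unvetted-startup", False, True, False, False)

print(risk_tier(risk_score(vetted)))    # → low
print(risk_tier(risk_score(unvetted)))  # → high
```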

Establish a controlled path forward. The goal isn't to block all AI — that battle is already lost, and frankly, it's the wrong fight. McKinsey's 2024 survey found that 65% of organizations now regularly use generative AI, nearly double the rate from ten months prior. The goal is to channel AI usage through managed, policy-governed pathways. Approve vetted providers with appropriate guardrails: content inspection for sensitive data, policy enforcement for compliance requirements, and audit logging for governance.

Apply enforcement progressively. For your sanctioned providers — the handful your organization has vetted and approved — deploy full content inspection with real-time policy enforcement. Block prompt injections. Redact PII before it leaves your network. Enforce compliance policies per provider, per team, per use case. For everything else, maintain discovery-mode visibility while your security team evaluates and makes informed decisions.

This graduated model — discover everything, enforce on approved providers, evaluate the rest — respects both security requirements and business reality.
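The graduated model reduces to a small decision function. The sketch below assumes the traffic has already been identified as going to an AI provider. The `SANCTIONED` set, the `Decision` fields, and the `decide` function are hypothetical names used for illustration.

```python
from dataclasses import dataclass

# Hypothetical allowlist: the handful of providers the organization has approved.
SANCTIONED = frozenset({"approved-ai.example"})


@dataclass(frozen=True)
class Decision:
    log_discovery: bool        # discover everything
    decrypt_and_inspect: bool  # enforce on approved providers only
    queue_for_review: bool     # evaluate the rest


def decide(ai_domain: str) -> Decision:
    """Choose handling for a destination already identified as an AI provider."""
    approved = ai_domain.lower() in SANCTIONED
    return Decision(
        log_discovery=True,
        decrypt_and_inspect=approved,
        queue_for_review=not approved,
    )


print(decide("approved-ai.example"))
print(decide("unvetted-ai.example"))
```

Unapproved providers are never decrypted in this sketch, which is what keeps discovery from breaking connections while the security team evaluates them.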

What CISOs Should Be Asking Right Now

Gartner predicts that by 2030, more than 40% of organizations will suffer security and compliance incidents caused by unauthorized AI tool usage. That window is narrowing. If you're evaluating your AI security posture, here are four questions worth asking your team — and your vendors:

1. How many AI providers can we detect today? If the answer is under 100, you're flying blind on the long tail.

2. Does discovery require TLS interception? If yes, you're trading visibility for disruption — and you'll miss traffic that bypasses the proxy entirely.

3. Can we discover AI usage at the endpoint, not just the network edge? Remote workers, VPN split-tunneling, and direct API calls all bypass network-only detection.

4. Is our enforcement model graduated or binary? Block-everything and allow-everything are both losing strategies. You need a spectrum: discover, evaluate, govern, enforce.

Shadow AI isn't a future problem. It's a today problem with a widening gap between what your employees are doing and what your security team can see. Closing that gap starts with discovery — the kind that works quietly, comprehensively, and without breaking the tools your people have already decided they need.

See what AI tools your organization is actually using. Start a free BlueAspen trial and get shadow AI visibility in minutes, with no broken connections and no user disruption.